Patriot Viper III Review: 2x4 GB at DDR3-2400 C10-12-12 1.65 V

by Ian Cutress on November 18, 2013 1:00 PM EST

CPU Compute

One aspect of CPUs I like to exploit is their compute ability: whether a variety of mathematical loads can stress the system in a way that real-world usage might not. For these benchmarks we use tests developed for MP servers and workstation systems back in early 2013, such as grid solvers and Brownian motion code. Please head over to the first of those reviews, where the mathematics and small snippets of code are available.

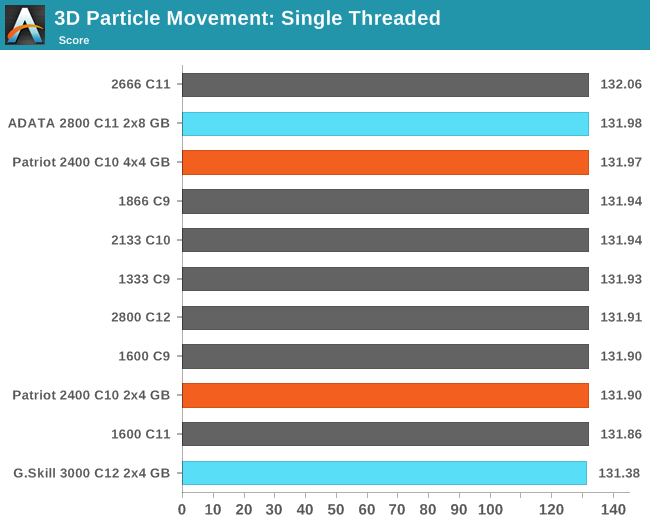

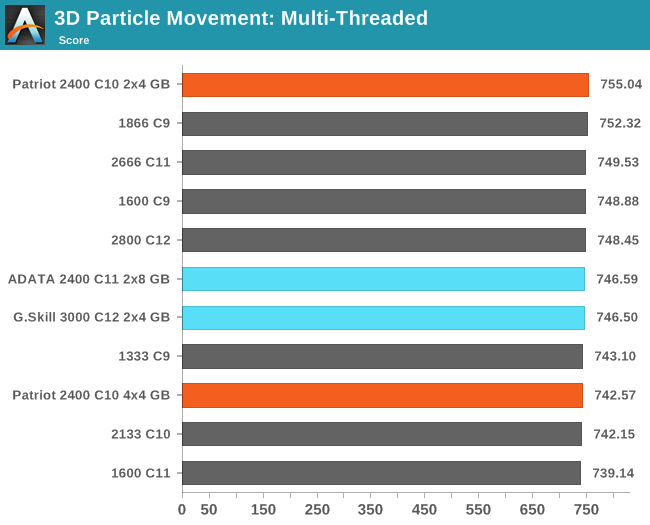

3D Movement Algorithm Test

The algorithms in 3DPM employ either uniform or normal-distribution random number generation, and vary in the amount of trigonometric operations, conditional statements, generation and rejection, fused operations, etc. The benchmark runs through six algorithms for a specified number of particles and steps, calculates the speed of each algorithm, then sums them all for a final score. This is an example of a real-world situation that a computational scientist may find themselves in, rather than a pure synthetic benchmark. The benchmark is also parallel across the simulated particles, and we test single-threaded as well as multi-threaded performance. Results are expressed in millions of particles moved per second, and a higher number is better.
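The six 3DPM algorithms themselves are not listed here, so the following is only a minimal sketch, assuming each algorithm picks a uniformly random direction on the unit sphere and moves a particle one step along it. Two hypothetical variants illustrate the contrast described above: `trig_unit_vector` (trigonometric operations) and `rejection_unit_vector` (generation and rejection). The names, step length and the "moves per second" counting are assumptions, and this serial Python code stands in for the benchmark's threaded implementation.

```python
import math
import random
import time


def trig_unit_vector(rng):
    """Uniform direction via trigonometry: z ~ U[-1, 1], azimuth ~ U[0, 2*pi)."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def rejection_unit_vector(rng):
    """Uniform direction via generation and rejection: sample the cube, keep points in the unit ball."""
    while True:
        x, y, z = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
        r2 = x * x + y * y + z * z
        if 0.0 < r2 <= 1.0:  # the conditional that rejects samples outside the ball
            r = math.sqrt(r2)
            return x / r, y / r, z / r


def move_particles(direction, n_particles, n_steps, step=1.0, seed=1):
    """Random-walk every particle; return final positions and particle moves per second."""
    rng = random.Random(seed)
    positions = []
    start = time.perf_counter()
    for _ in range(n_particles):  # particles are independent, so this is the loop to parallelize
        x = y = z = 0.0
        for _ in range(n_steps):
            dx, dy, dz = direction(rng)
            x, y, z = x + step * dx, y + step * dy, z + step * dz
        positions.append((x, y, z))
    elapsed = max(time.perf_counter() - start, 1e-9)
    return positions, n_particles * n_steps / elapsed


def score(n_particles=1000, n_steps=50):
    """Sum the per-algorithm rates (millions of moves per second) into one final score."""
    total = 0.0
    for algorithm in (trig_unit_vector, rejection_unit_vector):
        _, rate = move_particles(algorithm, n_particles, n_steps)
        total += rate / 1e6
    return total
```

Summing the per-algorithm rates mirrors the "sum them all for a final score" step, so a slow algorithm (for example one with many rejections) pulls the total down in proportion to its share of the work.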

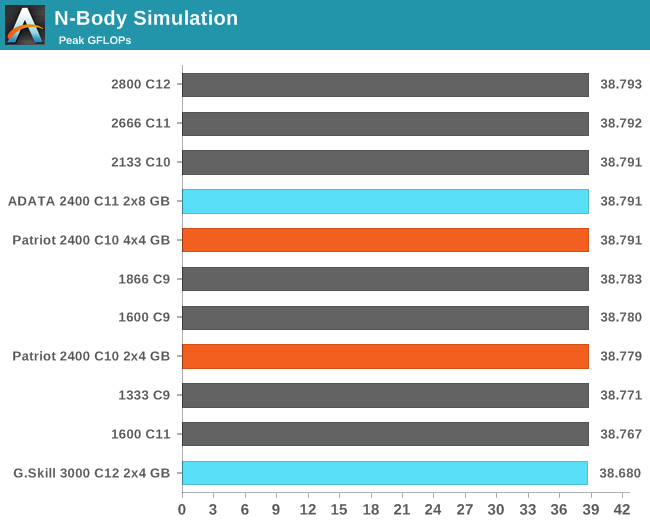

N-Body Simulation

When a series of heavy mass elements are in space, they interact with each other through the force of gravity. Thus when a star cluster forms, the interaction of every large mass with every other large mass defines the speed at which these elements approach each other. When dealing with millions and billions of stars on such a large scale, the movement of each of these stars can be simulated through the physical laws that describe the interactions. The benchmark detects whether the processor is SSE2 or SSE4 capable, and runs the corresponding code path. We run a simulation of 10240 particles of equal mass; the output for this code is in GFLOPs, and the result recorded is the peak GFLOPs value.
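The "every mass with every other mass" interaction is a direct O(N^2) sum. The sketch below assumes a softened Newtonian kernel and equal masses; the softening length, `G = 1` units and the figure of 20 floating-point operations per pairwise interaction (a common counting convention, not the benchmark's documented one) are all assumptions used only to turn a timing into a GFLOPs number. It is plain Python, not the SSE2/SSE4 code the benchmark selects.

```python
import math
import random
import time

# Assumption: ~20 floating-point operations per softened pairwise interaction.
FLOPS_PER_INTERACTION = 20


def accelerations(pos, mass, softening=1e-2, G=1.0):
    """Direct O(N^2) sum: every body feels the gravity of every other body."""
    n = len(pos)
    eps2 = softening * softening
    acc = []
    for i in range(n):
        xi, yi, zi = pos[i]
        ax = ay = az = 0.0
        for j in range(n):
            if i == j:
                continue
            dx, dy, dz = pos[j][0] - xi, pos[j][1] - yi, pos[j][2] - zi
            r2 = dx * dx + dy * dy + dz * dz + eps2  # softening avoids a singularity as r -> 0
            s = G * mass[j] / (r2 * math.sqrt(r2))   # G * m_j / |r|^3
            ax += s * dx
            ay += s * dy
            az += s * dz
        acc.append((ax, ay, az))
    return acc


def measure_gflops(n=256, seed=0):
    """Equal-mass random cluster in a unit cube; return accelerations and GFLOPs for one force pass."""
    rng = random.Random(seed)
    pos = [(rng.random(), rng.random(), rng.random()) for _ in range(n)]
    mass = [1.0 / n] * n
    start = time.perf_counter()
    acc = accelerations(pos, mass)
    elapsed = max(time.perf_counter() - start, 1e-9)
    return acc, n * (n - 1) * FLOPS_PER_INTERACTION / elapsed / 1e9
```

Because the work grows with N^2, a run at 10240 particles performs roughly 10^8 interactions per force pass, which is why this style of test is dominated by arithmetic throughput rather than memory.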

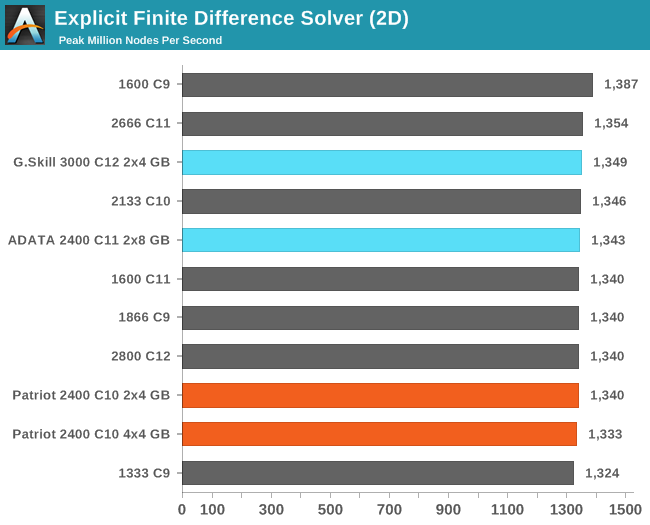

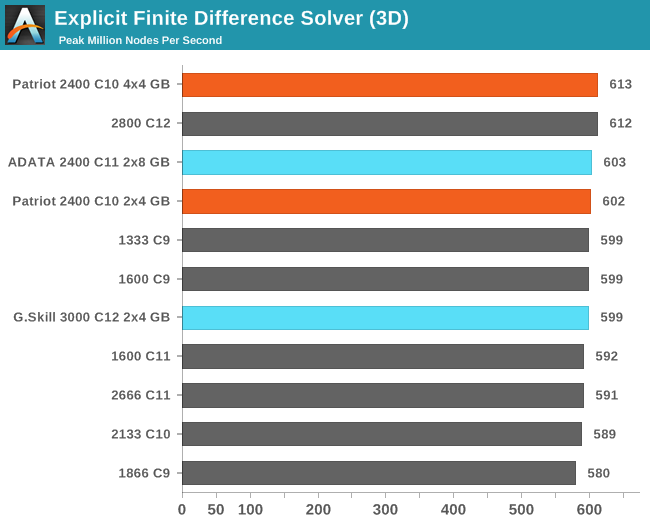

Grid Solvers - Explicit Finite Difference

For any grid of regular nodes, the simplest way to calculate the next time step is to use the values of the nodes around each point. This makes for easy mathematics and parallel simulation, as each node calculated depends only on the previous time step, not on its neighbours in the time step currently being calculated. By choosing a regular grid, we avoid the extra levels of memory access that irregular grids require. We test both 2D and 3D explicit finite difference simulations with 2^n nodes in each dimension, using OpenMP as the threading operator in single precision. The grid is isotropic and the boundary conditions are sinks. We iterate through a series of grid sizes, and results are shown in terms of ‘million nodes per second’, where the peak value is given in the results – higher is better.
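As a rough illustration only, the sketch below assumes the explicit solver integrates the 2D heat (diffusion) equation with the standard five-point stencil, with sink boundaries modelled as edges held at zero. Isotropy appears as a single coefficient `r = alpha*dt/dx^2` in both directions. The function names, the point heat source and the choice of r are assumptions, and this is serial double-precision Python, not the single-precision OpenMP code being benchmarked.

```python
import time


def explicit_step(u, r):
    """One explicit (FTCS) step of the 2D heat equation.

    Every new value is computed only from the previous time step, so all
    interior nodes could be updated in parallel.
    """
    n = len(u)
    new = [[0.0] * n for _ in range(n)]  # edges stay at 0.0: the sink boundaries
    for i in range(1, n - 1):
        up, row, down = u[i - 1], u[i], u[i + 1]
        for j in range(1, n - 1):
            laplacian = up[j] + down[j] + row[j - 1] + row[j + 1] - 4.0 * row[j]
            new[i][j] = row[j] + r * laplacian
    return new


def run_explicit(exponent, steps, r=0.2):
    """Simulate a 2^exponent x 2^exponent grid; return the grid and million nodes per second."""
    if r > 0.25:
        raise ValueError("the explicit 2D scheme needs r = alpha*dt/dx^2 <= 0.25 to stay stable")
    n = 2 ** exponent
    u = [[0.0] * n for _ in range(n)]
    u[n // 2][n // 2] = 1.0  # a single unit of heat in the middle of the grid
    start = time.perf_counter()
    for _ in range(steps):
        u = explicit_step(u, r)
    elapsed = max(time.perf_counter() - start, 1e-9)
    return u, n * n * steps / elapsed / 1e6
```

The double buffer (`u` read, `new` written) is what makes every node independent within a step; the price is the `r <= 0.25` stability limit, which ties the time step to the square of the grid spacing.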

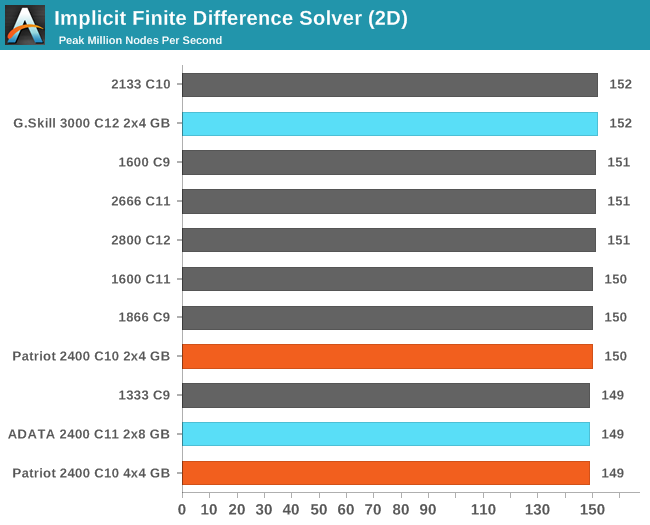

Grid Solvers - Implicit Finite Difference + Alternating Direction Implicit Method

The implicit method takes a different approach to the explicit method – instead of considering one unknown in the new time step to be calculated from known elements in the previous time step, we consider that an old point can influence several new points by way of simultaneous equations. This adds to the complexity of the simulation – the grid of nodes is solved as a series of rows and columns rather than points, reducing the parallel nature of the simulation by a dimension and drastically increasing the memory requirements of each thread. The upside is that the stability rules relating the time step to the grid spacing are much less stringent. For this we simulate a 2D grid of 2^n nodes in each dimension, using OpenMP in single precision. Again our grid is isotropic with the boundaries acting as sinks. We iterate through a series of grid sizes, and results are shown in terms of ‘million nodes per second’, where the peak value is given in the results – higher is better.
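The row-and-column structure can be sketched with the Peaceman-Rachford form of ADI, assuming (as for the explicit sketch) a 2D heat equation with zero-valued sink edges; the specific ADI variant, the names and the value of r are assumptions. Each half step solves one tridiagonal system per row with the Thomas algorithm, then the grid is transposed so the second half step does the same for columns. Rows are independent of one another, which is where the threads would be split, and each solve needs its own scratch arrays, which is the extra per-thread memory mentioned above.

```python
import time


def thomas(a, b, c, d):
    """Solve a constant-coefficient tridiagonal system (sub-diagonal a, diagonal b, super-diagonal c)."""
    n = len(d)
    cp, dp = [0.0] * n, [0.0] * n  # per-solve scratch arrays: the extra memory each thread needs
    cp[0], dp[0] = c / b, d[0] / b
    for k in range(1, n):  # forward sweep
        denom = b - a * cp[k - 1]
        cp[k] = c / denom
        dp[k] = (d[k] - a * dp[k - 1]) / denom
    x = [0.0] * n
    x[-1] = dp[-1]
    for k in range(n - 2, -1, -1):  # back substitution
        x[k] = dp[k] - cp[k] * x[k + 1]
    return x


def adi_half_step(u, r):
    """Implicit along each row, explicit along the columns; edges held at 0 (sinks)."""
    n = len(u)
    out = [[0.0] * n for _ in range(n)]
    for i in range(1, n - 1):  # rows are independent, so they can be distributed across threads
        rhs = [u[i][j] + 0.5 * r * (u[i - 1][j] - 2.0 * u[i][j] + u[i + 1][j]) for j in range(1, n - 1)]
        out[i][1:n - 1] = thomas(-0.5 * r, 1.0 + r, -0.5 * r, rhs)
    return out


def transpose(u):
    return [list(col) for col in zip(*u)]


def adi_step(u, r):
    """Peaceman-Rachford ADI: rows implicit first, then columns implicit."""
    half = adi_half_step(u, r)
    return transpose(adi_half_step(transpose(half), r))


def run_adi(exponent, steps, r=4.0):
    """r = alpha*dt/dx^2 may exceed the explicit limit of 0.25; the ADI step stays stable."""
    n = 2 ** exponent
    u = [[0.0] * n for _ in range(n)]
    u[n // 2][n // 2] = 1.0
    start = time.perf_counter()
    for _ in range(steps):
        u = adi_step(u, r)
    elapsed = max(time.perf_counter() - start, 1e-9)
    return u, n * n * steps / elapsed / 1e6
```

With `r = 4.0` the explicit scheme would diverge, while this step keeps the solution bounded; the cost is a sequential sweep along every row and column instead of fully independent node updates.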

48 Comments

jabber - Tuesday, November 19, 2013

Indeed, or for those of us that found girls, moved out, got older, changed hobbies, just realised that running benchmarks all day is a waste of life or found that actually the world doesn't end if you don't upgrade your PC every 6-12 months. There is a need for some sites that analyse how the current $60-$200 GPUs compare with those of 5 years ago, same for CPUs etc. Big market for that kind of info but unfortunately all we get is "this site's for enthusiasts, noob!" Well, thanks, but I'm still an enthusiast; I just have a mortgage now, or I'm only earning half what I was 5 years ago.

The info I get from Anandtech I can get anywhere......

Shadowmaster625 - Tuesday, November 19, 2013

In the conclusion you should add one bar to one of your charts... a bar where the RAM is at 1600 but the CPU is clocked just 100MHz higher, to really highlight how little impact memory speeds have on performance compared to even a tiny CPU clock speed boost.

jabber - Tuesday, November 19, 2013

Buy whatever matches best with your motherboard and GPU colour scheme I say.

ShieTar - Wednesday, November 20, 2013

Of course. And never overclock your memory when there is a full moon.

D1RTYD1Z619 - Tuesday, November 26, 2013

or if you have pickles in your fridge.

rmh26 - Tuesday, November 19, 2013

Ian, can you give a little more information about the size of your CPU compute benchmarks, specifically the grid size on the finite difference problems? In my experience memory bandwidth plays a large role in the speed of the computation. There are many HPC applications that have memory as the bottleneck, and I'm wondering if your problem size is small enough that it is being handled efficiently by the cache, so the RAM speed isn't having much influence. I know in my own CFD code going from 1600 to 1866 showed an almost linear speedup.

UltraWide - Tuesday, November 19, 2013

Did you have a chance to remove the heat-spreaders to see which ICs are in these? I am assuming Hynix MFR?

Gen-An - Tuesday, November 19, 2013

Hynix DFR actually. 2Gbit ICs (256MB each) so the same size as CFR but with nowhere near the overclockability.