The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync Features

In many ways FreeSync and G-SYNC are comparable. Both refresh the display as soon as a new frame is available, at least within their normal range of refresh rates. There are differences in how this is accomplished, however.
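To make the "refresh as soon as a new frame is available" idea concrete, here is a minimal sketch comparing how long a finished frame waits before the display shows it. It is an illustration only, not how either vendor implements things: the function names are hypothetical, the 30-144Hz range is an assumed example panel, and the adaptive case only models frames that land inside the dynamic range.

```python
import math


def wait_fixed_vsync(frame_ready_ms: float, refresh_hz: float) -> float:
    """Delay (ms) before a finished frame is shown on a fixed-refresh
    display with VSYNC on: it must wait for the next refresh boundary."""
    interval = 1000.0 / refresh_hz
    next_refresh = math.ceil(frame_ready_ms / interval) * interval
    return next_refresh - frame_ready_ms


def wait_adaptive(frame_ready_ms: float, min_hz: float, max_hz: float) -> float:
    """Delay (ms) with adaptive refresh for a frame that finishes
    frame_ready_ms after the previous refresh. Only the in-range case
    is modeled; out-of-range frame times raise ValueError."""
    shortest = 1000.0 / max_hz  # panel cannot refresh faster than this
    longest = 1000.0 / min_hz   # panel cannot hold a frame longer than this
    if not shortest <= frame_ready_ms <= longest:
        raise ValueError("frame time is outside the dynamic refresh range")
    return 0.0  # the display refreshes when the frame arrives


if __name__ == "__main__":
    # A frame that takes 20 ms (50 FPS) on a 60Hz panel vs. an assumed 30-144Hz panel.
    print(round(wait_fixed_vsync(20.0, 60), 1))   # 13.3
    print(wait_adaptive(20.0, 30, 144))           # 0.0
```

In this toy model, the 20ms frame misses the 16.7ms refresh on a fixed 60Hz display and sits idle until the 33.3ms refresh, while the adaptive display simply refreshes when the frame arrives.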

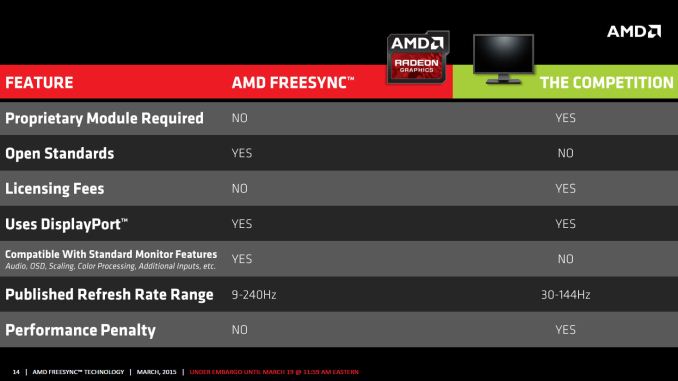

G-SYNC uses a proprietary module that replaces the normal scaler hardware in a display. Besides cost factors, this means that any company looking to make a G-SYNC display has to buy that module from NVIDIA. Of course, NVIDIA went with a proprietary module because Adaptive Sync didn’t exist when they started working on G-SYNC, so they had to create their own protocol. Basically, the G-SYNC module controls all the regular core features of the display like the OSD, but it’s not as full featured as a “normal” scaler.

In contrast, as part of the DisplayPort 1.2a standard, Adaptive Sync (which is what AMD uses to enable FreeSync) will likely become part of many future displays. The major scaler companies (Realtek, Novatek, and MStar) have all announced support for Adaptive Sync, and it appears most of the changes required to support the standard could be accomplished via firmware updates. That means even if a display vendor doesn’t have a vested interest in making a FreeSync branded display, we could see future displays that still work with FreeSync.

Having FreeSync integrated into most scalers has other benefits as well. All the normal OSD controls are available, and the displays can support multiple inputs – though FreeSync of course requires the use of DisplayPort as Adaptive Sync doesn’t work with DVI, HDMI, or VGA (DSUB). AMD mentions in one of their slides that G-SYNC also lacks support for audio input over DisplayPort, and there’s mention of color processing as well, though this is somewhat misleading. NVIDIA's G-SYNC module supports color LUTs (Look Up Tables), but they don't support multiple color options like the "Warm, Cool, Movie, User, etc." modes that many displays have; NVIDIA states that the focus is on properly producing sRGB content, and so far the G-SYNC displays we've looked at have done quite well in this regard. We’ll look at the “Performance Penalty” aspect as well on the next page.

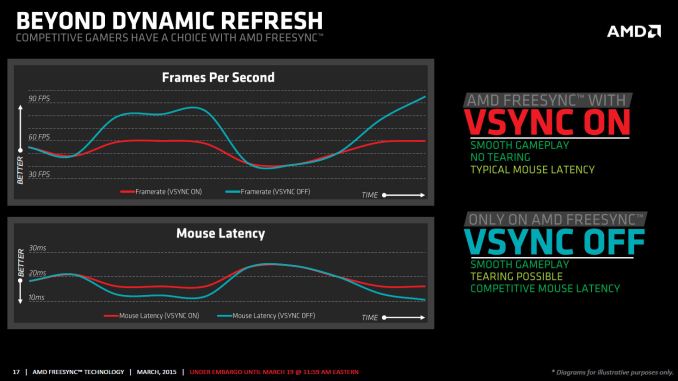

One other feature that differentiates FreeSync from G-SYNC is how things are handled when the frame rate is outside of the dynamic refresh range. With G-SYNC enabled, the system will behave as though VSYNC is enabled when frame rates are either above or below the dynamic range; NVIDIA's goal was to have no tearing, ever. That means if you drop below 30FPS, you can get the stutter associated with VSYNC, while going above the display's maximum refresh rate (60Hz or 144Hz, depending on the display) is not possible – the frame rate is capped. Admittedly, neither situation is a huge problem, but AMD provides an alternative with FreeSync.

Instead of always behaving as though VSYNC is on, FreeSync can revert to either VSYNC off or VSYNC on behavior if your frame rates are too high/low. With VSYNC off, you could still get image tearing but at higher frame rates there would be a reduction in input latency. Again, this isn't necessarily a big flaw with G-SYNC – and I’d assume NVIDIA could probably rework the drivers to change the behavior if needed – but having choice is never a bad thing.
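The out-of-range behavior described above can be summarized in a small sketch. This is a toy model of the policy as this article describes it, not driver code: `Fallback`, `Behavior`, and `behavior` are hypothetical names, and the 30-144Hz range in the usage lines is an assumed example panel.

```python
from dataclasses import dataclass
from enum import Enum


class Fallback(Enum):
    """What to do when the frame rate leaves the dynamic refresh range."""
    VSYNC_ON = "vsync on"    # G-SYNC's only behavior; one of FreeSync's two options
    VSYNC_OFF = "vsync off"  # FreeSync's other option


@dataclass(frozen=True)
class Behavior:
    mode: str
    tearing_possible: bool
    frame_rate_capped: bool


def behavior(fps: float, min_hz: float, max_hz: float, fallback: Fallback) -> Behavior:
    """Describe what the user sees at a given frame rate (illustrative model)."""
    if min_hz <= fps <= max_hz:
        # Inside the range, the display refreshes as frames arrive.
        return Behavior("adaptive", tearing_possible=False, frame_rate_capped=False)
    if fallback is Fallback.VSYNC_ON:
        # No tearing; above the range the frame rate is capped, and below
        # it the usual VSYNC stutter can appear.
        return Behavior("vsync on", tearing_possible=False, frame_rate_capped=fps > max_hz)
    # VSYNC off: tearing can return, but frames are not held back.
    return Behavior("vsync off", tearing_possible=True, frame_rate_capped=False)


if __name__ == "__main__":
    # 200 FPS on an assumed 30-144Hz panel under each fallback.
    print(behavior(200, 30, 144, Fallback.VSYNC_ON))
    print(behavior(200, 30, 144, Fallback.VSYNC_OFF))
```

Under this model, G-SYNC corresponds to always passing `Fallback.VSYNC_ON`, while FreeSync lets the user pick either value.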

There’s another aspect to consider with FreeSync that might be interesting: as an open standard, it could potentially find its way into notebooks sooner than G-SYNC. We have yet to see any shipping G-SYNC enabled laptops, and it’s unlikely most notebook manufacturers would be willing to pay $200 or even $100 extra to get a G-SYNC module into a notebook; there's also the question of power requirements. Then again, earlier this year there was an inadvertent leak of some alpha drivers that allowed G-SYNC to function on the ASUS G751j notebook without a G-SYNC module, so it’s clear NVIDIA is investigating other options.

While NVIDIA may do G-SYNC without a module for notebooks, there are still other questions. With many notebooks using a form of dynamic switchable graphics (Optimus and Enduro), support for Adaptive Sync by the Intel processor graphics could certainly help. NVIDIA might work with Intel to make G-SYNC work (though it’s worth pointing out that the ASUS G751 doesn’t support Optimus so it’s not a problem with that notebook), and AMD might be able to convince Intel to adopt DP Adaptive Sync, but to date neither has happened. There’s no clear direction yet but there’s definitely a market for adaptive refresh in laptops, as many are unable to reach 60+ FPS at high quality settings.

350 Comments

ggg000 - Thursday, March 26, 2015

Freesync is a joke:

https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=VJ-Pc0iQgfk&fe...

https://www.youtube.com/watch?v=1jqimZLUk-c&fe...

https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=84G9MD4ra8M&fe...

https://www.youtube.com/watch?v=aTJ_6MFOEm4&fe...

https://www.youtube.com/watch?v=HZtUttA5Q_w&fe...

ghosting like hell.

Mangolao - Friday, May 22, 2015

Heard free sync still have issue..... on TFT central ; the G2G is actually at 8.5ms instead of 1ms when connected with free sync on DP.... http://www.tftcentral.co.uk/reviews/benq_xl2730z.h...

cgalyon - Thursday, March 19, 2015

Good review, helps clear up a lot with respect to these new features. I've long thought that achieving a sufficiently high FPS and refresh rate would take care of things, but it's not always possible to do that with how games have pushed the limits of card abilities.

I'm still on the fence about whether or not I should upgrade my monitor. These days I do a lot of my gaming on my TV by running it through my AV receiver. However, there are some games (like Civilization V) that just don't translate well to a couch-based experience.

Owls - Thursday, March 19, 2015

So gysync is overhyped garbage? Who would have thought?

invinciblegod - Thursday, March 19, 2015

Based on that statement, freesync is also garbage.

LancerVI - Thursday, March 19, 2015

I wouldn't say gsync was garbage. I would say gsync was DRM. Expensive DRM at that.

FriendlyUser - Thursday, March 19, 2015

At least it's not overhyped and costs less.

fatpenguin - Thursday, March 19, 2015

What's Intel's plan? Will they be supporting this in the future?

duploxxx - Thursday, March 19, 2015

Intel will bring another version: INTnosync, since the iGPU is not capable of running anything at decent FPS on the display res shipping today.....

MikeMurphy - Thursday, March 19, 2015

Which is precisely why Intel will benefit most from adaptive sync.